Out of shared memory error while dropping FHIR schema using IBM Cloud Databases for PostgreSQL · Issue #1631 · LinuxForHealth/FHIR · GitHub

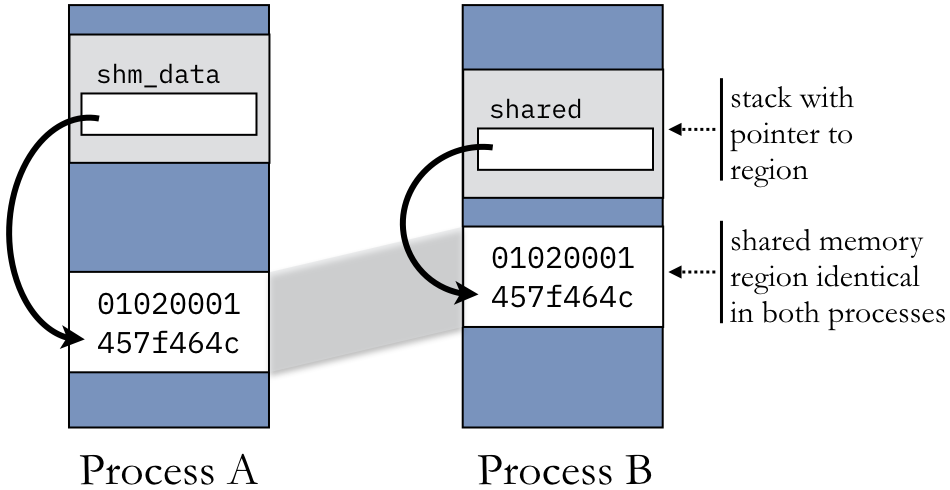

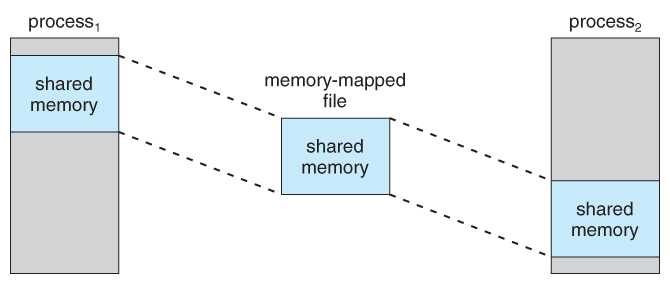

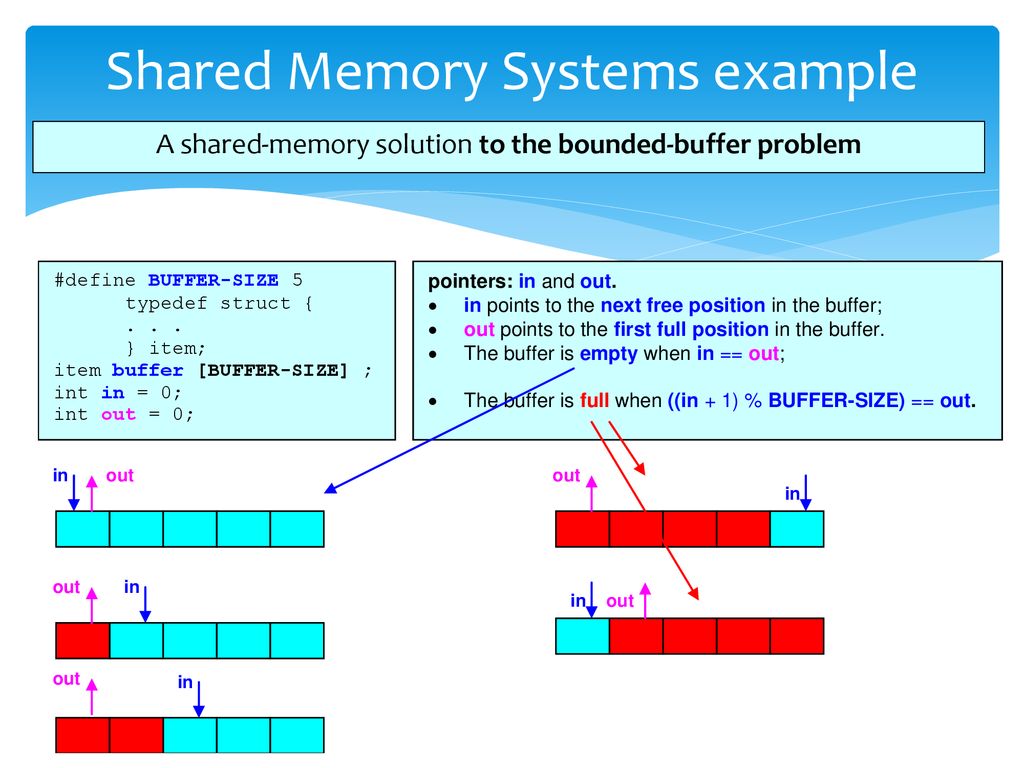

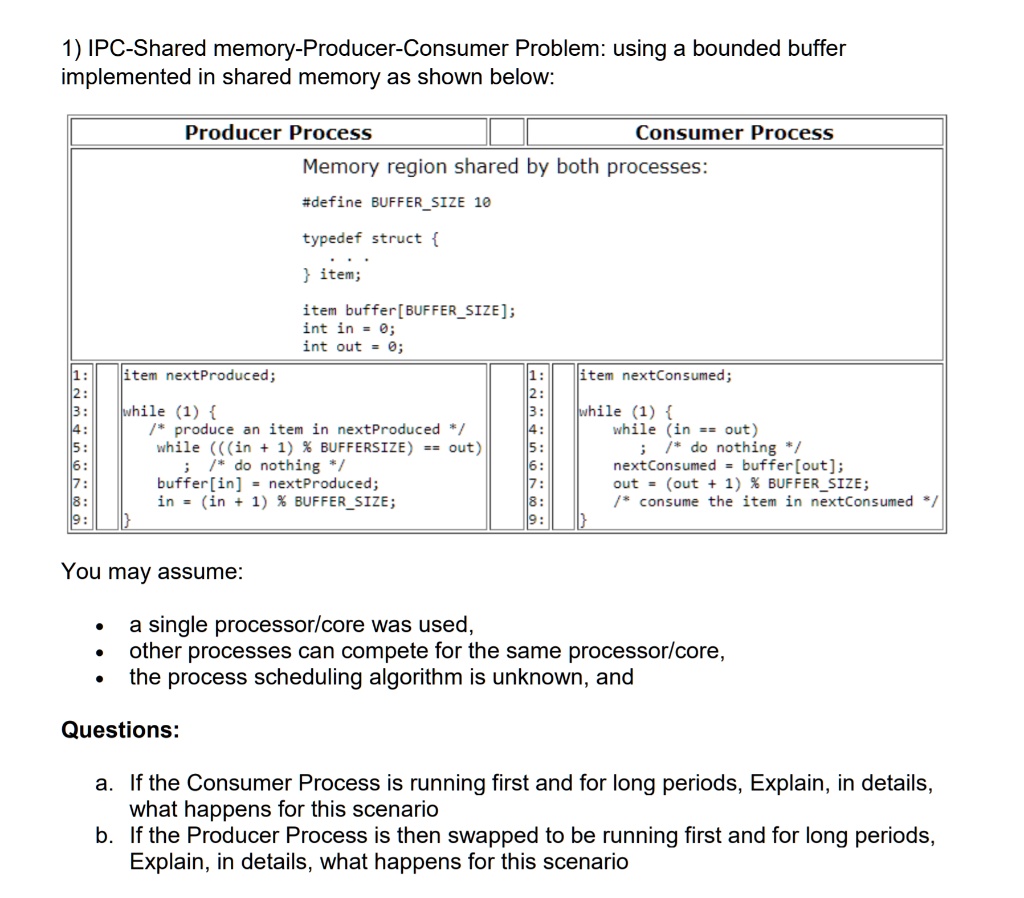

SOLVED: IPC, shared memory, producer-consumer problem: using a bounded buffer implemented in shared memory, with a producer process, a consumer process, and a memory region shared by both processes: #define BUFFERSIZE 10 typedef struct item; item

WARNING: out of shared memory (In docker container) during COUNT(*) · Issue #796 · timescale/timescaledb · GitHub

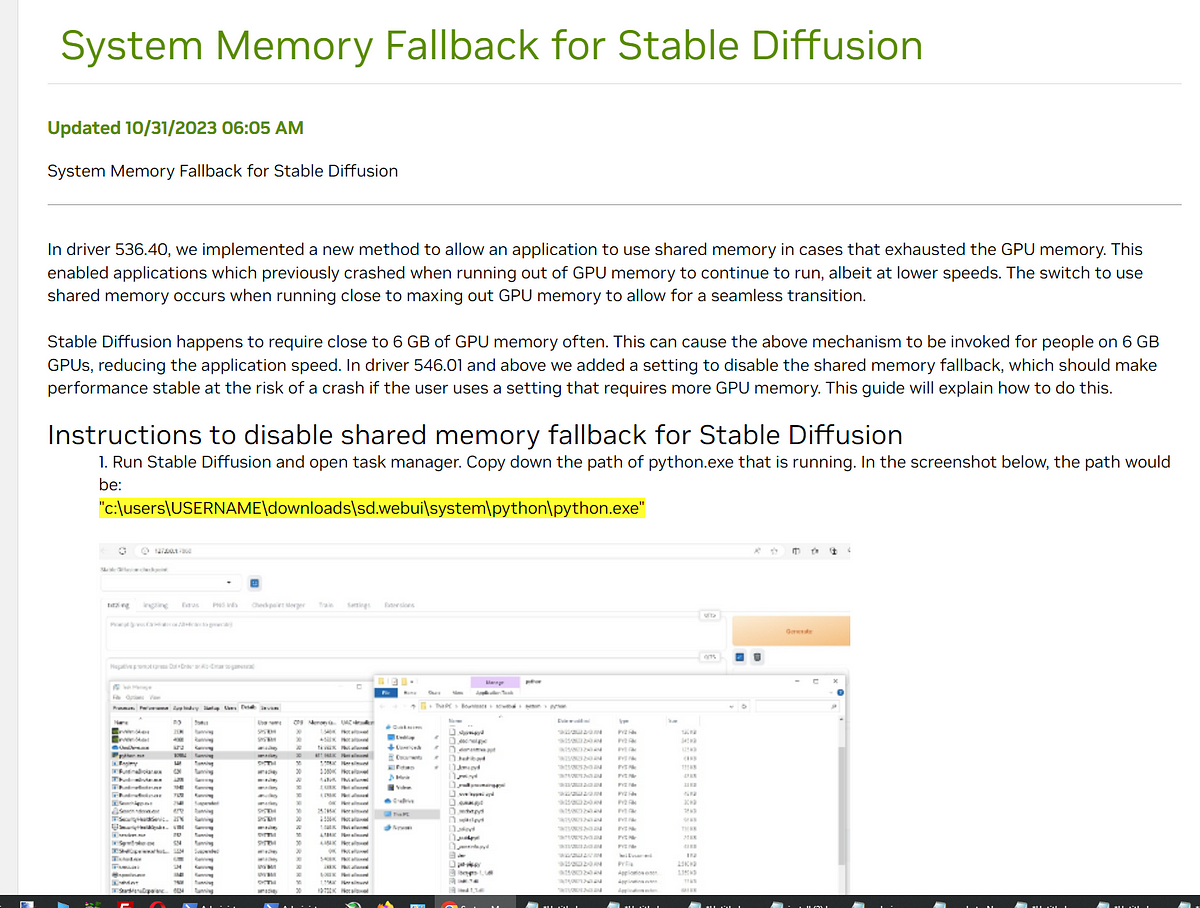

Get Huge SDXL Inference Speed Boost With Disabling Shared VRAM — Tested With 8 GB VRAM GPU | by Furkan Gözükara - PhD Computer Engineer, SECourses | Medium